High traffic sooner or later brings performance issues. Adding more memory or CPU often provides only temporary relief, because the real problem may lie in architectural limitations. For a lasting solution, you usually need to scale horizontally.

Together with our development team, we compare vertical and horizontal scaling approaches and review best practices to handle increasing demand. As a bonus, we share common scaling mistakes and walk through a real case where we helped scale an e-commerce store to support growing traffic.

Contents

- What is scaling and why it’s important from the start

- Vertical vs horizontal scaling in Rails

- What you need to know before choosing vertical scaling

- Best practices for scaling Rails app

- 5 Rails scaling mistakes to avoid

- RoR infrastructure for scaling: how to avoid difficulties from the start?

- Final words

What is scaling and why it’s important from the start

Scaling is increasing an application’s capacity to handle a growing number of user requests per minute (RPM) without slowing down.

Even if your business is not that big, scalability is still worth considering. Planning for it keeps your product flexible, ready for future growth, and free of major technical headaches later.

Why having a scalable application is a priority:

Performance matters

Even if you’re just building a simple blogging platform, performance issues can creep in as your data grows.

For example, the more posts you create, the more work your app has to do to manage them efficiently. As the posts table grows, querying recent posts by a specific user can start to slow down, especially if proper indexing or query optimization wasn’t implemented from the start.
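As a hedged illustration, assuming a posts table with user_id and created_at columns, a composite index lets PostgreSQL find one user’s newest posts without scanning the whole table. The migration below is a sketch for Rails 8 on PostgreSQL; the class name is hypothetical.

```ruby
# db/migrate/<timestamp>_add_user_id_and_created_at_index_to_posts.rb
class AddUserIdAndCreatedAtIndexToPosts < ActiveRecord::Migration[8.0]
  # CREATE INDEX CONCURRENTLY cannot run inside a transaction (PostgreSQL),
  # so disable the transaction this migration would otherwise run in.
  disable_ddl_transaction!

  def change
    # Serves queries like: WHERE user_id = ? ORDER BY created_at DESC LIMIT 10
    add_index :posts, [:user_id, :created_at], algorithm: :concurrently
  end
end
```

This index supports a query such as Post.where(user_id: user.id).order(created_at: :desc).limit(10). Column order matters: the same index also helps queries that filter on user_id alone, but not on created_at alone. Building it concurrently avoids blocking writes while the index is created, which matters once the table is large.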

Unscalable code is harder to fix

If scalability isn’t considered in your early decisions (e.g., database structure, background jobs, caching), you might have to rework large parts of your app later. That means more time, higher costs, and slower development when it really matters.

User growth can spike at any time

You launch your MVP with just a few users. One day, a tweet from an influencer brings in 10,000 visitors in an hour. Your app crashes under the load because it wasn’t optimized to handle that kind of spike.

Data complexity grows with features

As your app evolves, you’ll likely introduce more features like comments, likes, tags, notifications, etc. Each new feature adds more data relationships and query complexity. Without scalable patterns like eager loading, pagination, or indexing, things can get slow quite quickly.
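To make the N+1 problem concrete, here is a plain-Ruby sketch (no Rails required) that counts queries against a hypothetical FakeDB standing in for ActiveRecord. In a Rails app, you would get the second behavior with Post.includes(:comments), which typically loads all the comments in one additional query.

```ruby
# Hypothetical in-memory "database" that counts queries; it stands in for ActiveRecord.
class FakeDB
  attr_reader :query_count

  def initialize(posts, comments)
    @posts = posts       # e.g. [{ id: 1, title: "..." }]
    @comments = comments # e.g. [{ post_id: 1, body: "..." }]
    @query_count = 0
  end

  # Like: SELECT * FROM posts
  def all_posts
    @query_count += 1
    @posts
  end

  # Like: SELECT * FROM comments WHERE post_id = ?
  def comments_for(post_id)
    @query_count += 1
    @comments.select { |c| c[:post_id] == post_id }
  end

  # Like: SELECT * FROM comments WHERE post_id IN (...), grouped by post
  def comments_for_many(post_ids)
    @query_count += 1
    @comments.select { |c| post_ids.include?(c[:post_id]) }.group_by { |c| c[:post_id] }
  end
end

# N+1: one query for the posts, then one more query per post.
def comment_counts_n_plus_one(db)
  db.all_posts.to_h { |post| [post[:id], db.comments_for(post[:id]).size] }
end

# Eager loading: two queries in total, however many posts there are.
def comment_counts_eager(db)
  posts = db.all_posts
  by_post = db.comments_for_many(posts.map { |post| post[:id] })
  posts.to_h { |post| [post[:id], by_post.fetch(post[:id], []).size] }
end
```

With 50 posts, the first version issues 51 queries and the second issues 2, while returning the same result.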

Downtime hurts trust

If your app slows down or crashes during peak usage, users lose confidence. Worse, they might not come back. Scalability goes hand in hand with reliability, which is vital for your brand’s reputation.

Scaling a Ruby on Rails application is about more than handling large numbers of users. With the right infrastructure, you get a more robust, better-performing application that works efficiently and is ready for sudden growth.

With over 12 years of providing RoR services, we’ve learned what works, and what doesn’t. That’s why over 50 clients have left us a 5.0 rating on Clutch.

Vertical vs horizontal scaling in Rails

There are two main ways to approach scaling: vertical and horizontal. The former adds resources to a single server; the latter adds more servers to the system. Let’s quickly break down what each of these means.

Vertical scaling

Vertical scaling means improving your existing server by giving it more resources, like adding more RAM, a faster CPU, or extra disk storage. You’re still running your application on a single server, but that server becomes more powerful.

For example, your Rails app is on a server with 4 GB of RAM and starts slowing down. You fix it by upgrading to 16 GB of RAM and a better processor.

Vertical scaling works well when you’re just starting to see increased user traffic. It’s easy, straightforward, and usually doesn’t require changes to your code.

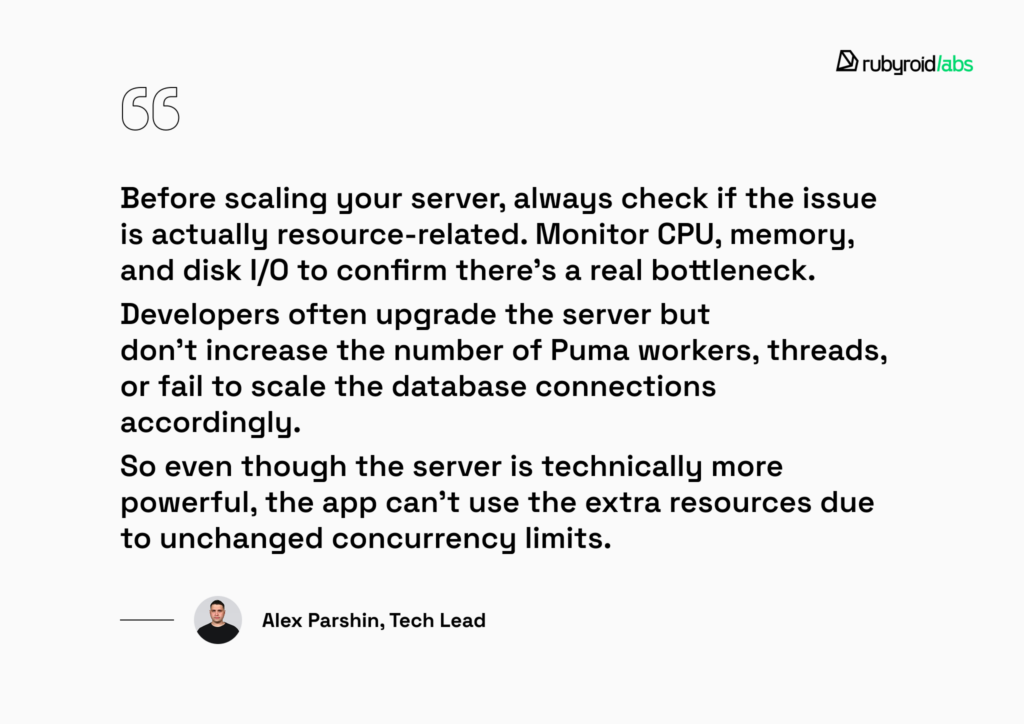

But here’s an important tip:

| Before you rush to buy more server resources, double-check what’s actually causing the slowdown. Sometimes, performance issues are due to inefficient database queries (like N+1 queries), memory leaks, or blocked input/output operations, not necessarily a lack of server resources. |

To verify if your server is genuinely overloaded, you can use simple monitoring tools like top or htop. These tools give you real-time insights into your CPU and memory usage, helping you quickly identify the real issue.

Nuances in this strategy:

- There’s a physical limit (you can’t keep adding resources forever).

- It can get expensive pretty quickly, as powerful hardware tends to cost more.

- If the server breaks, the whole app goes down.

Horizontal scaling

Horizontal scaling means running your Rails app on several machines simultaneously.

For example, you could deploy your Rails application on three separate servers and put a load balancer in front. This balancer efficiently distributes incoming traffic among your servers, ensuring no single machine gets overwhelmed.
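Load balancers such as Nginx, HAProxy, or a cloud provider’s balancer handle this for you, but the core idea is simple. Here is a minimal round-robin sketch in plain Ruby that skips servers marked as unhealthy; the server names and the mark_down/mark_up health flags are hypothetical.

```ruby
# Minimal round-robin balancer sketch: hands out servers in turn, skipping unhealthy ones.
class RoundRobinBalancer
  def initialize(servers)
    @servers = servers
    @healthy = servers.to_h { |s| [s, true] }
    @index = 0
    @lock = Mutex.new # next_server may be called from many threads
  end

  def mark_down(server)
    @lock.synchronize { @healthy[server] = false }
  end

  def mark_up(server)
    @lock.synchronize { @healthy[server] = true }
  end

  # Returns the next healthy server; raises if every server is marked down.
  def next_server
    @lock.synchronize do
      @servers.size.times do
        server = @servers[@index]
        @index = (@index + 1) % @servers.size
        return server if @healthy[server]
      end
      raise "no healthy servers available"
    end
  end
end
```

Real balancers add active health checks, timeouts, and other strategies such as least-connections, but round robin is a common default.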

The horizontal method of scaling is usually the best way to scale a Ruby on Rails product. It makes your application much more resilient. If one server has an issue, your users barely notice anything went wrong.

But, of course, horizontal scaling isn’t without its challenges:

- It has a more complex setup.

- You may need to make your app stateless.

- It requires infrastructure knowledge (load balancers, auto-scaling, etc.).

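One traditional hurdle for horizontal scaling was WebSockets (Action Cable): a message broadcast on one server has to reach clients connected to every other server, which usually meant running Redis as a pub/sub backend. Starting with Rails 8, Solid Cable can use your database for this instead, so you can scale WebSockets across servers without the extra Redis overhead.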

| Feature | Vertical scaling | Horizontal scaling |
| --- | --- | --- |
| Core concept | Adding more power (RAM, CPU, disk) to an existing single server. | Adding more machines that work together as a single system. |
| Ease of use | High. Straightforward and usually requires no code changes. | Low. Complex setup; requires infrastructure knowledge. |
| Resilience | Low. If the server fails, the entire application goes down. | High. If one server fails, the others keep the application running. |
| Limitations | Has a physical hardware ceiling and can become very expensive. | Requires the app to be stateless. |

Combining both methods is a balanced and cost-effective way to maintain good performance and reliability without jumping into expensive, complicated architecture changes too soon.

What you need to know before choosing vertical scaling

Most product owners start with vertical scaling because it’s the simplest and fastest way to give an overloaded server some breathing room as usage grows.

Adding RAM and CPU, and tuning thread or worker counts, can be very effective in certain scenarios.

1. Puma (your app server)

If you’re using Puma (and most Rails apps are), vertical scaling can help you handle more requests in parallel by increasing:

- Threads. Ideal for apps waiting on I/O (external APIs or DB queries). More threads = more concurrent requests.

- Workers. These are separate processes forked from a master process, so they can run Ruby code on several CPU cores in parallel. With preload_app!, Puma loads your app before forking, and each worker shares memory with the master through Copy on Write (CoW) until a page is modified. This significantly reduces the total RAM footprint.

| A good starting formula for workers is the number of CPU cores * 1.5. To speed up server boot during auto-scaling, use the bootsnap gem, which caches load-path lookups and compiled Ruby code (new Rails apps include it by default). |
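The rule of thumb above only looks at CPU. Memory caps the worker count too, because Copy on Write shares only the pages a worker never modifies, so each worker still grows its own heap. The hypothetical helper below takes the CPU-based estimate and limits it by how many workers fit in RAM; the per-worker memory and the reserve for the OS and other processes are assumptions you should measure on your own servers.

```ruby
require "etc"

# Hypothetical helper: CPU-based rule of thumb (cores * 1.5), capped by available RAM.
# All memory figures are in megabytes.
def suggested_puma_workers(ram_mb:, worker_mb:, cores: Etc.nprocessors, reserve_mb: 1024)
  by_cpu = (cores * 1.5).floor
  by_ram = (ram_mb - reserve_mb) / worker_mb # integer division: whole workers only
  [[by_cpu, by_ram].min, 1].max              # always run at least one worker
end
```

For example, a 4-core server with 16 GB of RAM and workers that settle around 512 MB gets the CPU-based figure of 6, while an 8-core machine with only 4 GB of RAM and 1 GB workers is limited by memory to 3.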

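```ruby
# config/puma.rb

# Worker processes; with preload_app! they share memory with the master via Copy on Write
workers Integer(ENV.fetch("WEB_CONCURRENCY", 4))

# Threads per worker (min and max set to the same value for a fixed-size pool)
threads_count = Integer(ENV.fetch("RAILS_MAX_THREADS", 5))
threads threads_count, threads_count

# Load the app before forking workers; essential for Copy on Write
preload_app!
```

When it helps: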

- Your CPU and RAM aren’t maxed out yet.

- You notice long queue times or slow request handling.

2. Database (PostgreSQL or MySQL)

More RAM can significantly help your database performance, especially if:

- Your queries are hitting the disk too often (slow reads).

- The database cache isn’t big enough to hold your tables or indexes.

- You aim to handle more simultaneous connections.

But be careful, as each Puma worker holds its own connection pool.

So if you have 4 workers and each can spin up 16 threads, that’s potentially 64 database connections. PostgreSQL, for example, defaults to max_connections = 100. You don’t want to hit that limit unintentionally.
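The same arithmetic is easy to script before you change WEB_CONCURRENCY or RAILS_MAX_THREADS. These hypothetical helpers assume each Puma worker’s pool is sized to its thread count and each Sidekiq process needs about one connection per unit of concurrency; the headroom parameter leaves room for consoles, migrations, and monitoring tools, which also open connections.

```ruby
# Hypothetical helper: how many database connections a deployment may open at peak.
def total_db_connections(servers:, workers_per_server:, threads_per_worker:,
                         sidekiq_processes: 0, sidekiq_concurrency: 0)
  web = servers * workers_per_server * threads_per_worker # one pool per Puma worker
  jobs = sidekiq_processes * sidekiq_concurrency          # one connection per job thread
  web + jobs
end

# True if the peak estimate still leaves `headroom` connections free.
def within_connection_limit?(needed, max_connections: 100, headroom: 10)
  needed + headroom <= max_connections
end
```

One server with 4 workers x 16 threads needs 64 connections, which fits. Adding a second identical server doubles that to 128, already past PostgreSQL’s default of 100, which is where smaller pools or a pooler like PgBouncer come in.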

3. Sidekiq (or other background workers)

Vertical scaling helps here too, but again, only in the right situations. Adding more threads or processes to Sidekiq is useful if:

- Your job queue is backed up and not processing tasks fast enough.

- Your jobs are lightweight in terms of CPU and mostly wait on IO (like sending emails, calling APIs, etc.).

What can go wrong here?

Vertical scaling sounds simple, but it can introduce new problems if you’re not careful. Here are a few common pitfalls:

- Out of memory (OOM) errors. Adding too many workers or threads can quickly eat up your server’s memory, which leads to crashes or service restarts.

- Database connection contention. Every Puma thread that talks to the database holds its own connection from the pool, so 5 servers x 20 threads (one Puma worker each) = 100 persistent connections. The risk: you hit your database’s hard limit, and new connections are refused (PostgreSQL reports “sorry, too many clients already”). To solve this at scale, use PgBouncer. It sits between your Rails app and your Postgres database, acting as a connection pooler that allows thousands of app threads to share a much smaller number of actual database connections efficiently.

- Multithreading and shared state don’t mix. Rails itself is thread-safe, but your own code and some gems may not be: class-level variables, globals, or memoized values shared across requests can lead to race conditions. Be extra cautious when going multithreaded.
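Here is what guarding shared state looks like in plain Ruby. The hypothetical RequestCounter is shared by several threads, the way an object cached at class level is shared by all Puma threads in a worker. The += in increment is a read-modify-write; wrapping it in a Mutex guarantees no update is lost. Without the lock, lost updates are rare on CRuby because of the GVL but not guaranteed to be impossible, and they happen readily on Ruby implementations without a GVL, such as JRuby.

```ruby
# Hypothetical counter shared across threads.
class RequestCounter
  def initialize
    @count = 0
    @lock = Mutex.new
  end

  # The read-modify-write happens while holding the lock,
  # so concurrent increments cannot overwrite each other.
  def increment
    @lock.synchronize { @count += 1 }
  end

  def value
    @lock.synchronize { @count }
  end
end

counter = RequestCounter.new
threads = Array.new(10) { Thread.new { 1_000.times { counter.increment } } }
threads.each(&:join)
puts counter.value # prints 10000
```

For data that should live only for the duration of one request, Rails offers ActiveSupport::CurrentAttributes, which is reset between requests, as a safer alternative to class-level variables.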

So, wrapping up vertical scaling: increasing RAM and the number of threads or workers can truly help, but only if server resources are the real bottleneck.

If your app is slow due to things like inefficient SQL queries, N+1 issues, or poor architecture, then simply throwing more hardware at it won’t solve the problem.

That’s why, as a Rails development company, we advise fixing those issues first and then combining vertical scaling with horizontal scaling. This way, you make your servers more powerful and your entire system more resilient and future-proof.

Mixing vertical and horizontal scaling: how this approach works in practice

When helping the Yelz online store handle growing traffic, we saw page load times slow down during peak hours. Using monitoring tools to check CPU and RAM usage, we confirmed the server was running close to its resource limits.

We began with vertical scaling. The existing infrastructure still had headroom, so we increased the server’s RAM and adjusted Puma to allow more workers and threads. To keep the memory footprint low, we utilized Puma’s preload_app! to take advantage of Copy on Write (CoW). Since each Puma worker maintains its own database connection pool, we calculated and tuned the database pool settings to ensure the increased throughput didn’t exceed the database’s connection limits.

Vertical scaling alone would only delay the next bottleneck, so we introduced horizontal scaling next. Because the application was designed to be stateless, we were able to deploy multiple Puma instances behind a load balancer, distributing incoming traffic across several application processes. This provided the redundancy needed to remain operational even if one instance became overloaded.

This mixed approach proved effective because each method addressed a different constraint. Vertical scaling provided an immediate performance boost with minimal infrastructure changes. Horizontal scaling improved resilience and created headroom for future growth.

Best practices for scaling Rails app

Now, with the help of our RoR development team, we’re sharing helpful tips and best practices for both types of scaling to keep your Rails app fast, reliable, and ready to handle growth.

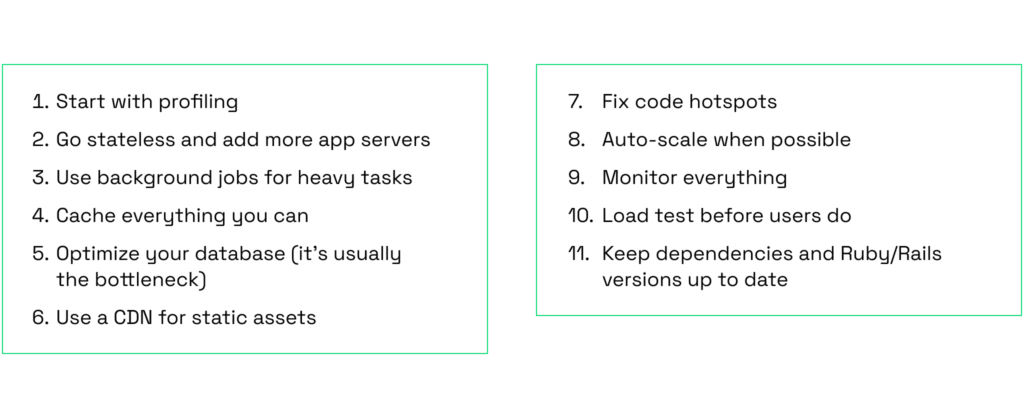

1. Start with profiling

Before scaling, measure first. Use tools like Skylight, rack-mini-profiler, or New Relic to identify bottlenecks.

Start with the usual suspects: N+1 queries, slow database calls, or inefficient rendering.

You can’t fix what you can’t see, so profiling is always step one.

2. Go stateless and add more app servers

Rails apps scale smoothly when they are stateless, meaning any request can be handled by any server. This requires two things:

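- Session storage. Rails’ default cookie store keeps session data in the user’s encrypted cookie, so it already works across servers. If you need server-side sessions, store them in your database or a centralized store such as Redis, never on an individual server’s disk.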

- File storage. Never save user uploads to the server’s local public/uploads folder. Use Active Storage configured with a cloud provider like AWS S3 or Google Cloud Storage. This ensures that a file uploaded through Server A can still be served by Server B.
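A hedged configuration sketch for both points, assuming an S3 service named amazon is defined in config/storage.yml (the storage.yml that Rails generates includes a commented-out amazon example) and using a hypothetical session cookie name.

```ruby
# config/environments/production.rb (fragment)
Rails.application.configure do
  # Keep uploads in a shared S3 bucket instead of each server's local disk.
  # :amazon must match a service name in config/storage.yml.
  config.active_storage.service = :amazon

  # The cookie store (Rails' default) keeps session data in the signed, encrypted
  # cookie itself, so any server behind the load balancer can read it.
  config.session_store :cookie_store, key: "_myapp_session"
end
```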

To protect your stateless cluster from malicious traffic or “noisy neighbors”, implement the rack-attack gem to provide robust rate-limiting and throttling at the entry point of your app.
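A minimal rack-attack initializer might look like the sketch below; the limits and the /login path are assumptions to tune for your app. Rack::Attack keeps its counters in a cache store (Rails.cache by default in a Rails app), and in a multi-server setup that store must be shared, such as Redis or Solid Cache, otherwise each server counts requests separately. Depending on the gem version, you may also need to register the middleware yourself, so check the gem’s README.

```ruby
# config/initializers/rack_attack.rb
class Rack::Attack
  # Allow each client IP up to 300 requests per 5 minutes.
  throttle("requests by ip", limit: 300, period: 5.minutes) do |request|
    request.ip
  end

  # Much stricter limit for login attempts (hypothetical path).
  throttle("logins by ip", limit: 5, period: 20.seconds) do |request|
    request.ip if request.path == "/login" && request.post?
  end
end
```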

3. Use background jobs for heavy tasks

Don’t make users wait for long processes like sending emails, generating PDFs, or resizing images. Offload those to background jobs. Sidekiq (Redis-based) remains an industry standard for high-performance job processing, while Solid Queue is the new default in Rails 8.

Solid Queue eliminates the operational overhead of managing a separate Redis instance. It stores jobs in your existing database (PostgreSQL, MySQL, or SQLite), which leaves you with one less service to run and monitor.
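In a new Rails 8 app, production is already wired to Solid Queue with lines like these (shown here as a sketch); to use Sidekiq instead, you would set the adapter to :sidekiq. Jobs are then enqueued with perform_later, for example a hypothetical InvoicePdfJob.perform_later(order.id), and the request returns without waiting for the PDF.

```ruby
# config/environments/production.rb (fragment)
Rails.application.configure do
  # Run Active Job through Solid Queue, which stores jobs in the database.
  config.active_job.queue_adapter = :solid_queue

  # Solid Queue's tables live in a separate "queue" database defined in config/database.yml.
  config.solid_queue.connects_to = { database: { writing: :queue } }
end
```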

4. Cache everything you can

Caching is your best friend when traffic spikes. Use Rails’ built-in caching, backed by a store such as Redis, to keep rendered views and expensive query results. For high-traffic applications with large datasets, Solid Cache (the default cache store in new Rails 8 apps) can be a better fit.

Solid Cache stores cache entries in your database, on disk rather than in RAM. Because disk is much cheaper than memory, you can afford a much larger cache “window” at a fraction of the cost, trading slightly slower reads for far more capacity. That means you can keep even older or less-frequently visited pages cached and snappy.
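Whichever store you choose, application code usually talks to it through the cache-aside pattern: return a fresh cached value if there is one, otherwise compute it, store it, and return it. The TinyCache class below is a hypothetical, single-threaded, in-memory sketch of that pattern, not the Rails implementation; its clock is injectable so expiry can be tested without sleeping.

```ruby
# Minimal cache-aside sketch (the pattern behind Rails.cache.fetch).
class TinyCache
  Entry = Struct.new(:value, :expires_at)

  def initialize(clock: -> { Process.clock_gettime(Process::CLOCK_MONOTONIC) })
    @store = {}
    @clock = clock
  end

  def fetch(key, expires_in:)
    entry = @store[key]
    return entry.value if entry && entry.expires_at > @clock.call

    value = yield # cache miss or expired entry: do the expensive work
    @store[key] = Entry.new(value, @clock.call + expires_in)
    value
  end
end
```

In Rails, the same pattern is Rails.cache.fetch(["top_products", category.id], expires_in: 10.minutes) { expensive_query }, where the key, category, and expensive_query are hypothetical; it works with whichever store config.cache_store points to (:solid_cache_store in a new Rails 8 app).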

5. Optimize your database (it’s often the bottleneck)

No matter how much you scale your infrastructure, slow SQL will result in a slow application. To improve performance:

- Add composite indexes. For queries that filter by multiple columns (e.g., WHERE user_id = ? AND status = ?), a single index on both columns is usually much faster than two separate indexes.

- Use specialized gems. Use pghero to identify missing indexes and slow-running queries. For pagination, the pagy gem adds very little overhead compared to heavier pagination gems; on very large tables, keyset (cursor) pagination also avoids the slowdown that large OFFSET values cause on deep pages.

- Eager loading. Load associations up front with includes or preload to avoid N+1 queries, and use the Bullet gem to catch them early in development.
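Keyset pagination asks for the rows after the last id the client saw instead of skipping N rows. In SQL that is WHERE id > :cursor ORDER BY id LIMIT :per_page, which an index on id answers directly, whereas a large OFFSET makes the database walk past every skipped row. A plain-Ruby sketch over an in-memory array sorted by id, with a hypothetical Row struct:

```ruby
# Hypothetical row type; rows are assumed to be sorted by id.
Row = Struct.new(:id, :title)

# Returns [page, next_cursor]; next_cursor is nil when the page came back short,
# meaning the end of the data was reached.
def keyset_page(rows, after: nil, per_page: 20)
  page = rows.lazy.select { |row| after.nil? || row.id > after }.first(per_page)
  next_cursor = page.size == per_page ? page.last.id : nil
  [page, next_cursor]
end
```

With ActiveRecord, the equivalent query is Post.where("id > ?", cursor).order(:id).limit(20), and the client sends back the last id it received to get the next page. The trade-off is that you can’t jump straight to an arbitrary page number.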

If your system mainly retrieves data rather than modifies it, you can create copies (replicas) of your main database. These copies handle read requests while the main database handles writes. This spreads out the workload and makes data retrieval faster since multiple users can fetch data from different copies simultaneously.
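In Rails, replicas are configured through the multiple-databases API. A hedged sketch, assuming config/database.yml defines a primary database and a primary_replica entry marked with replica: true, and using a hypothetical Post model. Replicas can lag slightly behind the primary, so a read right after a write may not see it yet; Rails’ automatic role switching (config.active_record.database_selector) deals with this by sending reads to the primary for a short delay after a write.

```ruby
# app/models/application_record.rb
class ApplicationRecord < ActiveRecord::Base
  primary_abstract_class

  # Writes go to :primary, reads can go to :primary_replica (names from database.yml).
  connects_to database: { writing: :primary, reading: :primary_replica }
end

# Explicitly run a read-only query against the replica:
recent_posts = ActiveRecord::Base.connected_to(role: :reading) do
  Post.order(created_at: :desc).limit(50).to_a
end
```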

6. Use a CDN for static assets

Avoid serving static files like images, JavaScript, or CSS directly from your application server. Instead, offload them to a Content Delivery Network (CDN) such as Cloudflare or Amazon CloudFront. CDNs distribute and cache static content across a global network of edge servers, allowing files to be delivered from locations geographically closer to your users.

When a user requests an asset, the CDN checks for a cached version. If one is available, it delivers the content instantly from the closest edge server, which reduces latency, improves load times, and lightens the demand on your origin server.

Modern CDNs also respect cache-control headers, giving you fine-grained control over how long assets are cached and when they are refreshed. Combined with fingerprinted file names (the Rails asset pipeline adds a content digest to each asset’s name), this lets you cache assets for a long time while users still get new versions right after a deploy.
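On the Rails side, pointing asset URLs at a CDN is mostly configuration. A sketch, assuming a pull CDN at the hypothetical host cdn.example.com that fetches missing files from your app and honors its cache-control headers:

```ruby
# config/environments/production.rb (fragment)
Rails.application.configure do
  # Asset helpers (image_tag, stylesheet_link_tag, ...) build URLs on the CDN host.
  config.asset_host = "https://cdn.example.com"

  # Fingerprinted files can be cached for a year: a changed file gets a new name.
  config.public_file_server.headers = { "cache-control" => "public, max-age=#{1.year.to_i}" }
end
```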

7. Fix code hotspots

Use tools like New Relic, Skylight, or Rack Mini Profiler to find slow endpoints or memory-hungry features.

Most of these tools allow you to see how long each controller action takes to respond. Thus, you can focus directly on the parts of your app that are slowing things down.

Even a single poorly written query can severely impact performance under high traffic, so it’s important to catch and fix them early.
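Under the hood, per-request timing is simple to sketch. The hypothetical Rack middleware below measures how long the rest of the stack takes and reports requests slower than a threshold; the threshold and the reporting lambda are assumptions, and APM tools do far more (per-query breakdowns, traces, aggregation).

```ruby
# Hypothetical Rack middleware that reports slow requests.
class SlowRequestReporter
  DEFAULT_REPORTER = ->(path, seconds) { warn "SLOW #{path} took #{seconds.round(3)}s" }

  def initialize(app, threshold: 0.5, reporter: DEFAULT_REPORTER)
    @app = app
    @threshold = threshold # seconds
    @reporter = reporter
  end

  def call(env)
    started = Process.clock_gettime(Process::CLOCK_MONOTONIC)
    status, headers, body = @app.call(env)
    elapsed = Process.clock_gettime(Process::CLOCK_MONOTONIC) - started
    @reporter.call(env["PATH_INFO"], elapsed) if elapsed >= @threshold
    [status, headers, body]
  end
end
```

It measures the time until the app returns its response, not the time spent streaming the body. In a Rails app, you could register it with something like config.middleware.use SlowRequestReporter, threshold: 1.0.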

8. Auto-scale when possible

If you’re on AWS, Heroku, or a Kubernetes setup, enable auto-scaling so your infrastructure grows (or shrinks) with real-time demand. That way, you’re not overpaying for idle servers.

9. Monitor everything

Make monitoring a priority. Use tools like Datadog, Prometheus, or even just your Rails logs combined with Logstash.

These tools help you stay aware of your app’s performance, catch errors quickly, and track infrastructure usage in real-time. It’s your early warning system.

10. Load test before users do

Before a big launch or event, use tools like JMeter, k6, or Loader.io to simulate real user traffic. This way, you can find and fix weak spots in your system ahead of time.

11. Keep dependencies and Ruby/Rails versions up to date

New versions of Ruby and Rails often come with performance improvements. Don’t wait years to upgrade. Staying current makes scaling easier.

Ruby on Rails gives you a strong foundation, but even powerful frameworks need some prep when traffic starts to climb.

Begin with performance fundamentals like implementing background processing, database indexing, and caching strategies. As your needs increase, expand your infrastructure horizontally and build automation systems.

It’s best to hire a skilled Ruby on Rails development team that understands how the Rails codebase behaves, knows what pitfalls to expect, and can address them in a timely manner.

5 Rails scaling mistakes to avoid

In 12 years of working with Ruby on Rails projects, our RoR developers have seen many scaling efforts fail, often due to avoidable, common mistakes made by previous teams.

Let’s walk through some of the most frequent ones.

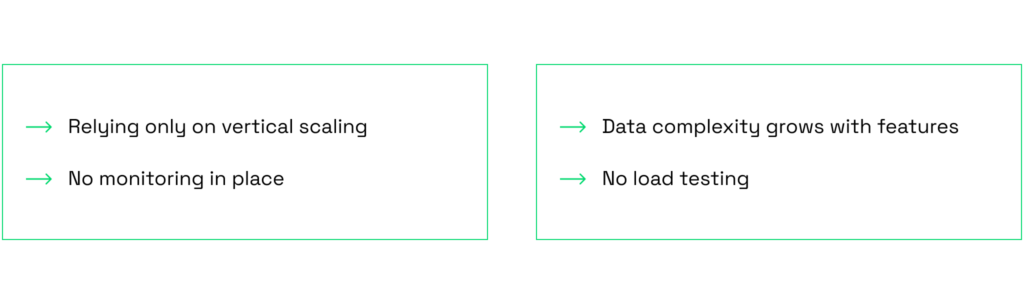

1. Relying only on vertical scaling

Simply increasing server resources (CPU and RAM) without optimizing system architecture is a short-term solution. This approach eventually hits performance limits as user demand increases, leading to inevitable slowdowns.

2. No monitoring in place

Another major mistake is skipping performance monitoring tools to track your app’s server load and potential problems.

An important insight comes from our RoR developer, Vlad Shishko, who notes:

3. Ignoring database health

The database is often the first bottleneck in a growing Rails app, and many developers overlook it.

Skipping indexes, not setting up read replicas, misusing ActiveRecord (think N+1 queries or pulling huge chunks of data into memory), and not managing your connection pool properly are all common mistakes that can drag down performance fast.

4. No load testing

Don’t wait for real traffic to discover problems.

The scaling process can take a lot of effort and might uncover unexpected issues in your code. That’s why it’s so important to trust your app to RoR developers with proven experience who know how to scale it the right way.

RoR infrastructure for scaling: how to avoid difficulties from the start?

Planning your app’s architecture early allows you to develop something robust from the start without running into major issues or having to spend a bigger budget on fixes later. It also makes life way easier for your developers, as it speeds up the scaling process when the time comes.

This is what you can do before starting your product.

- Write modular, maintainable code

Everything should have its place and purpose. Avoid stuffing too much logic into models or controllers. Break things into smaller, reusable parts. This makes your app easier to understand and easier to scale and debug later.

- Apply Domain-Driven Design (DDD)

DDD means building an app around real-world business logic. Instead of forcing all logic into Rails conventions, create meaningful structures that match your domain like organizing features into clear domains or using form objects, value objects, and policies. This makes your app more flexible as it grows.

- Use service objects, jobs, and serializers wisely

Service objects help move heavy logic out of controllers and models into dedicated classes. Keep each one focused on a single responsibility. Move time-consuming tasks (like emails and API calls) to background jobs (with Sidekiq or Solid Queue) to keep your application responsive and the user experience smooth. Serializers (like ActiveModel::Serializers or jsonapi-serializer, the maintained successor of fast_jsonapi) help you shape your API responses cleanly. Don’t send more data than needed.
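A hypothetical service object in plain Ruby: ApplyDiscount does one thing, exposes a single .call entry point, and returns a small result object instead of touching params or rendering, so controllers, jobs, and tests can all reuse it. Amounts are in integer cents to avoid floating-point rounding.

```ruby
# Hypothetical service object: one responsibility, one public entry point.
class ApplyDiscount
  Result = Struct.new(:total_cents, :error, keyword_init: true) do
    def success?
      error.nil?
    end
  end

  def self.call(subtotal_cents:, percent:)
    new(subtotal_cents: subtotal_cents, percent: percent).call
  end

  def initialize(subtotal_cents:, percent:)
    @subtotal_cents = subtotal_cents
    @percent = percent
  end

  def call
    unless (0..100).cover?(@percent)
      return Result.new(total_cents: @subtotal_cents, error: "percent must be between 0 and 100")
    end

    # Integer division rounds the discounted total down to whole cents.
    Result.new(total_cents: @subtotal_cents * (100 - @percent) / 100, error: nil)
  end
end
```

A controller action then only calls ApplyDiscount.call(...) and decides how to render the result, which keeps it thin and easy to test.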

- Use service-based architecture or microservices where it makes sense.

When your app grows, consider extracting some parts into separate services or even lightweight microservices. This lets you scale and deploy them independently, without dragging down the whole app. You can find more about monoliths and microservices in our recent blog post with real-world examples.

These early architectural choices might seem like more effort up front. However, they’re investments that pay off significantly as your application grows.

Start with a solid monolith following these principles, monitor your application’s performance, and let actual usage patterns guide your scaling decisions.

Final words

No matter the size of your business, building an application that can scale is key to maintaining solid performance and, in turn, delivering high-quality service to your customers and users.

By planning for scalability early, you’re setting your system up to handle the unexpected: traffic spikes, increasing user flows, and the addition of new features. Instead of scrambling to fix performance issues later, you’ll be ready to grow with confidence.

You can scale a Ruby on Rails application in two primary ways:

- Vertically, by enhancing your existing server.

- Horizontally, by spreading the workload across multiple servers.

To do it right, follow best practices that ensure a smooth and efficient scaling process.

When hiring a Ruby on Rails development team, whether you’re building from scratch or scaling an existing app, make sure you’re working with professionals who have proven experience in scaling.